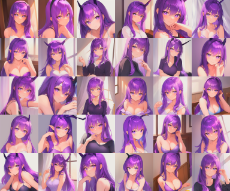

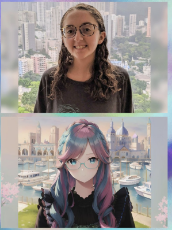

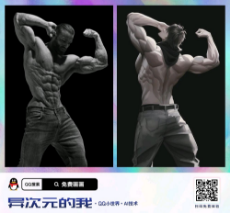

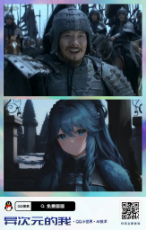

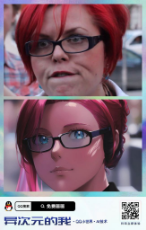

Thought an art thread for AI generated images would be fun. There are a few different AIs now and they are really good.

/cyb/ - Cyberpunk Fiction and Fact

Cyberpunk is the idea that technology will condemn us to a future of totalitarian nightmares. Here you can discuss recent events and how technology has been used to facilitate greater control by the elites, or works of fiction.

>>1945

I still think it's fundamentally worse when Art is the target. Forget about employment. If a law somehow managed to end Art as a commodity it wouldn't even matter to me. I've considered Art as the soul and heart of what it means to be human for a long time.

How many times have people said corny shit like.

<"Sure, machines are better than humans at a lot of things. But can a machine turn a blank canvas into a masterpiece."

No disrespect for manual labor, a lot of that is also a form of Art in a way. Uncle Adolf said there must be respect between those who work with their mind, and those who work with their body.

The fact AI or machine learning can eventually master every form of human Art might as well be the end of mankind for me. I think many people just forget that AI digital Art, was once as shit as AI writing currently is.

There is nothing that can be done about that. Technology gives and takes. It already killed the mystery behind chess and further diminished the role of creativity.

>>1950

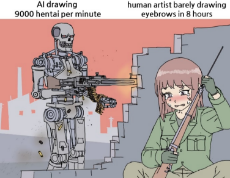

Does AI art really do anything to diminish actual art though? It's not zero sum.

Also, it's worth mentioning that it takes a not-insignificant amount of creativity and persistence to make these images. On top of coming up with a prompt, you have to refresh the program hundreds of times over the course of hours to get satisfying images. That is an expression of the individual, even if it's generated by a machine.

I believe that AI art will usher in a new era of creativity and content/shitposting, where individuals are no longer limited by their personal skill at drawing.

>It already killed the mystery behind chess

Oh yeah. Big RIP for chess players. Even low-power programs are basically unbeatable at this point. It's like trying to arm wrestle the Terminator.

>>1950

>The fact AI or machine learning can eventually master every form of human Art might as well be the end of mankind for me.

Why does it matter though? We live in a nightmarish, dystopian hellhole that's only going to get significantly worse before it might even have a chance of getting better. Who cares?

Also, the human "spirit" isn't going anywhere when it comes to art and artistic expression. People will always find a way to make art in one way or another and AI has no effect on that. Do you really care if the shitty corporate calarts diversity portraits are made by AI?

The reaction to AI art reminds me of when buzzfeed journos were laid off and got told to "learn to code". Poetic justice.

>>1951

>On top of coming up with a prompt

>I believe that AI art will usher in a new era of creativity and content/shitposting, where individuals are no longer limited by their personal skill at drawing.

I apologize for being unnecessarily rude earlier. But, fuck...

Here we go again, over and over again. Can you seriously not fucking grasp that AI art will most likely NOT be limited to digital drawing? It's like you didn't even read the fucking post.

>>1952

>Also, the human "spirit" isn't going anywhere when it comes to art and artistic expression. People will always find a way to make art in one way or another and AI has no effect on that.

It was perhaps the greatest stronghold of man against the machine. The one thing that was meant to set us apart. Sure you can still play chess... I don't even think you get it. That or you're not arguing in good faith.

>The reaction to AI art reminds me of when buzzfeed journos were laid off and got told to "learn to code". Poetic justice.

Well I don't think journalism is a great example of the "human spirit", especially the kind of journalism we are talking about.

>>1960

>AI art will most likely NOT be limited to digital drawing?

What's wrong with that? It's more content creation. It's not going to prevent people from making their own art without it. It'll just enable people to generate content very quickly. It still requires human input to get ideas in the first place.

>>1961

>It still requires human input to get ideas in the first place.

Bait or sheer retardation. That is kind of the gamble one has to make these days.

Anonymous

No.1963

>>1962

It hasn't gotten to the point of generating completely original ideas that fulfill needs that people didn't even know they had.

>>1961

Am gonna try one more time just because people seem to be helplessly clueless everywhere I go, and thus I'm inclined to believe you are being serious.

Picture the following.

AI unironically starts writing novels better than anything man can conjure.

As I implied here >>1950

>I think many people just forget that AI digital Art, was once as shit as AI writing currently is.

Do you seriously think a prompt is hot shit for that kind of AI?

>It hasn't gotten to the point...

<YET

Anonymous

No.1966

>>1964

>AI unironically starts writing novels better than anything man can conjure.

Okay, but what's so bad about this? Sounds like it could be really productive.

Anonymous

No.1967

Yeah, I figured it was bait. Great venting in the end, have a nice day lads.

1665802848.jpg (27.5 KB, 720x720, 77a7b0c42e547fd2ec093fc913a66f2d.jpg)

>>1960

>It was perhaps the greatest stronghold of man against the machine.

Machines are made by people and I'd consider them an extension of humanity in a way. There is no man vs dichotomy as far as I'm concerned. One should aspire to harness the glory of a big block V8 powering a computer that generates ultra realistic images of pony pussy while running over niggers.

>I don't even think you get it. That or you're not arguing in good faith.

Reevaluate.

Anonymous

No.1973

1665805172_1.png (1.3 MB, 1080x1068, Screenshot_20221014-233737.png)

1665805172_2.png (1.0 MB, 1072x1080, Screenshot_20221014-233751.png)

1665805172_3.png (1.6 MB, 1079x1331, Screenshot_20221014-233811.png)

1665805172_4.png (669.4 KB, 1080x722, Screenshot_20221014-233825.png)

1665805172_5.png (1.0 MB, 1079x1084, Screenshot_20221014-233857.png)

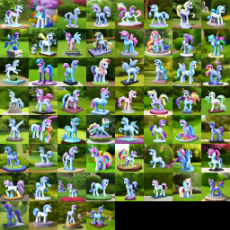

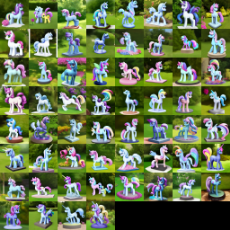

So many cute poners

Anonymous

No.1974

>>1972

Cheer up. Try considering the pros of AI advancing. Imagine no longer being beholden to artists to get your fix of mares.

Anonymous

No.1980

1666034135.mp4 (1.1 MB, Resolution:640x640 Length:00:00:18, randomly_generated.mp4)

Anonymous

No.1989

>>1985

>>1987

I should make the first randomly generated fire emblem game.

the music? AI generated. the plot? AI generated. every character? every level? AI generated. Fuck trying to write anything important with deep themes. I should start with the default template people expect from FE and then fill it with AI generated content.

Anonymous

No.1991

Anonymous

No.2015

1666451824_1.png (401.4 KB, 512x512, unknown - 2022-10-22T105506.375.png)

1666451824_2.png (445.6 KB, 512x512, unknown - 2022-10-22T105514.367.png)

1666451824_3.png (414.4 KB, 512x512, unknown - 2022-10-22T105520.550.png)

1666451824_4.png (457.1 KB, 512x512, unknown - 2022-10-22T105531.117.png)

1666451824_5.png (420.4 KB, 512x512, unknown - 2022-10-22T105541.887.png)

1666451848_1.png (426.3 KB, 512x512, unknown - 2022-10-22T105553.631.png)

1666451848_2.png (461.0 KB, 512x512, unknown - 2022-10-22T105605.946.png)

1666451848_3.png (419.7 KB, 512x512, unknown - 2022-10-22T105617.253.png)

1666451848_4.png (433.2 KB, 512x512, unknown - 2022-10-22T105629.235.png)

1666451848_5.png (417.1 KB, 512x512, unknown - 2022-10-22T105640.843.png)

.

1666451875_1.png (426.9 KB, 512x512, unknown - 2022-10-22T105652.598.png)

1666451875_2.png (418.4 KB, 512x512, unknown - 2022-10-22T105704.178.png)

1666451875_3.png (429.8 KB, 512x512, unknown - 2022-10-22T105715.035.png)

1666451875_4.png (410.8 KB, 512x512, unknown - 2022-10-22T105726.191.png)

1666451875_5.png (427.9 KB, 512x512, unknown - 2022-10-22T105738.815.png)

1666451896_1.png (404.3 KB, 512x512, unknown - 2022-10-22T105751.693.png)

1666451896_2.png (451.0 KB, 512x512, unknown - 2022-10-22T105805.616.png)

1666451896_3.png (397.0 KB, 512x512, unknown - 2022-10-22T105819.150.png)

.

>>2021

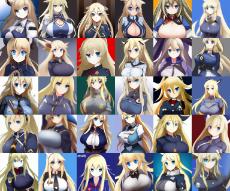

I see hundreds of pics of this quality on discord every day. I just only bothered dumping some now.

Anonymous

No.2025

>>2024

Program: automatic1111

Model: Astralites Ponyv1

Sampler: Euler a

Prompt: detailed fluffy fur, unicorn, (by taran fiddler and chunie , Michael & Inessa Garmash, Ruan Jia, Pino Daeni), volumetric lighting, ambient bloom, 4K, ((((solo)))), (((golden medieval full armor))), (golden full muzzle helmet with metal ears), (face covered), ((Fire for hair)), holy light
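
For anyone wondering what the stacked parentheses in that prompt are doing: as far as I know, the automatic1111 webui treats each pair of parentheses as multiplying the attention on the enclosed text by about 1.1, so `((((solo))))` lands at roughly 1.46. Below is a rough sketch of that arithmetic only. The function name `emphasis_spans` is made up for illustration, the 1.1 factor is an assumption taken from the webui's documented convention, and the real parser also handles things like `(word:1.3)` and square brackets, which this ignores.

```python
def emphasis_spans(prompt: str, factor: float = 1.1):
    """Split a prompt into (text, weight) spans by parenthesis nesting depth.

    Hypothetical helper: weight = factor ** depth, where depth is the
    number of parentheses currently open around the text.
    """
    spans, depth, buf = [], 0, []

    def flush():
        # Emit the buffered text (if any) at the current nesting depth.
        text = "".join(buf).strip(" ,")
        if text:
            spans.append((text, round(factor ** depth, 3)))
        buf.clear()

    for ch in prompt:
        if ch == "(":
            flush()
            depth += 1
        elif ch == ")":
            flush()
            depth = max(depth - 1, 0)  # tolerate unbalanced ")"
        else:
            buf.append(ch)
    flush()
    return spans


for text, weight in emphasis_spans("unicorn, ((((solo)))), (face covered), holy light"):
    print(f"{weight:>5}  {text}")
```

Text outside any parentheses keeps a weight of 1.0, so only the bracketed parts of the prompt get pushed harder.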

>>2026

Where can I get the Astralites Ponyv1 model?

I tried that prompt without the model and got this.

>>2027

Tested and looks like this is the model.

https://huggingface.co/AstraliteHeart/pony-diffusion

https://mega.nz/file/ZT1xEKgC#Xxir5udMmU_mKaRZAbBkF247Yk7DqCr01V0pDzSlYI0

>>2030

How does the model make art that good when most fandom art is show tracing- I mean vector art?

Anonymous

No.2032

>>2031

I think that is one of the wonders of Neural Networks. No one knows exactly why it works as well as it does, only that it works. But it is hit-and-miss depending on the seed number.

Anonymous

No.2033

>>2030

In case people want to know: for that image (the best-looking so far, and the first I ran) I used the prompt by >>2026

>detailed fluffy fur, unicorn, (by taran fiddler and chunie , Michael & Inessa Garmash, Ruan Jia, Pino Daeni), volumetric lighting, ambient bloom, 4K, ((((solo)))), (((golden medieval full armor))), (golden full muzzle helmet with metal ears), (face covered), ((Fire for hair)), holy light

>Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 3081589709, Size: 512x512
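
If you want to keep track of settings lines like the one above so a result can be reproduced later, a small parser is enough. This is only a sketch: `parse_settings` is a made-up name, and it assumes the line always has the `Key: value, Key: value` shape shown in this post.

```python
def parse_settings(line: str) -> dict:
    """Parse a 'Key: value, Key: value' settings line into a dict.

    Hypothetical helper; purely numeric values are converted to int.
    """
    settings = {}
    # Drop a leading quote marker ('>') and spaces, then split the pairs.
    for part in line.lstrip("> ").split(", "):
        key, _, value = part.partition(": ")
        settings[key.strip()] = int(value) if value.isdigit() else value
    return settings


settings = parse_settings(
    ">Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 3081589709, Size: 512x512"
)
print(settings["Seed"], settings["Sampler"])
```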

Anonymous

No.2035

>>2034

I've heard of training girls, but this is ridiculous!

What is this, the next Kantai Collection spinoff?

>>2034

>trains and anime

Add ponies to the equation and we'd have enough autism to go to Mars and back.

Anonymous

No.2038

>>2037

And Sonic. Autists love Sonic for some reason.

Maybe they wish they were Sonic? Maybe Tails the shy small genius child who wants to be cool like Sonic appeals to them? Maybe they just like fast things.

Anonymous

No.2045

>>2039

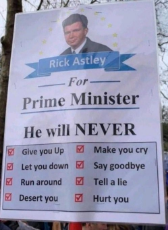

Videos like this are further proof that AI generated images don't "hurt art", but expand it. The concept of art is being democratized, so that even random people who have no talent for drawing or no time to paint can realize their creative ambitions and silly ideas.

It's said that beauty is in the eye of the beholder. A machine may be able to make immaculate images, but only a human can appreciate them. Likewise, only a human would have thought to use a machine to compile a bunch of anime girls into the Rick Rolled video, and AI helped them do it.

>>2052

Art has always been abundant. There's trillions of artworks out there. The fact that unpracticed artists can create their own images with memes just adds to the sphere.

Art didn't suddenly get worse once acrylic paints enabled artists to buy their paint instead of mixing it from the elements.

>>2052

That's only for market value, which is irrelevant. Art's value is subjective.

Just look at the boorus and see how there's tens of thousands of lewd pics of the mane six, but people still get excited when new

>>2053

There aren't. Painting well is hard

>>2054

All value is subjective. That value is based on scarcity. If you have infinite art then all of it becomes boring

There's lots of pics but a tiny fraction of them actually appeal to you. If you can press a button to get a new one perfectly suited to you, you'll get bored of it

EVERYTHING becomes boring if you have an endless supply of it

Anonymous

No.2056

>>2055

>If you have infinite art then all of it becomes boring

I've seen thousands of conventional pony artworks and thousands of AI artworks in the past couple weeks alone, but I'm not bored at all.

>That value is based on scarcity.

I beg to differ. When it comes to art, the more the merrier.

>If you can press a button to get a new one perfectly suited to you, you'll get bored of it

If I became bored of it, it must have not suited me perfectly.

>EVERYTHING becomes boring if you have an endless supply of it

I already have a virtually endless supply of mare porn for free, and I'm not even close to bored of it yet.

>>2052

Things can have inherent value unrelated to their scarcity and how desired they are.

Incredibly rare randomly generated NFTs unlikely to exist don't have any intrinsic value granted by their rarity.

Anonymous

No.2058

>>2057

>Incredibly rare randomly generated NFTs unlikely to exist don't have any intrinsic value granted by their rarity.

This. Regardless of how scarce something is, its true value is based on one of two things:

1. How useful it is to people

2. Its subjective, sentimental value

AI art is useful, and also enables people to generate artworks that meet their own subjective tastes.

Anonymous

No.2059

1666748761.png (663.5 KB, 512x512, unknown - 2022-10-25T214532.612.png)

1666760357.mp4 (11.8 MB, Resolution:572x382 Length:00:03:47, bad_apple_except_ai_generated.mp4)

Anonymous

No.2065

1666793492.png (716.9 KB, 512x512, unknown - 2022-10-26T101036.639.png)

Anonymous

No.2080

1666877046_1.png (420.4 KB, 512x512, unknown - 2022-10-27T092309.707.png)

1666877046_2.png (478.4 KB, 512x512, unknown - 2022-10-27T092322.956.png)

1666877046_3.png (445.7 KB, 512x512, unknown - 2022-10-27T092334.932.png)

1667218445.png (482.6 KB, 512x512, unknown - 2022-10-31T081336.846.png)

Does anyone want a grid of 35-50 images made with your chosen prompt? My PC is pretty powerful.

>>2160

Ooooooo. I can try to think of something, but from my testing, crafting an effective prompt is a science in itself, so I am not sure what a good prompt would be (my testing on my slow computer has not been too successful).

But if you can try to make some statues like in >>2115 (pic 3) it would be awesome. I think they look awesome.

>>2162

I'll need the prompt and model used for those pics. If I just said "(My Little Pony) garden statue" with the default model that came with the AI when I downloaded it I doubt I'll get good results, but I'll give it a go.

By the way, what does "Tiling" do? Whenever I check that box it just makes the output look inferior. Is there some purpose to it I'm missing?

>>2163

The one I had limited success with (but it takes 2-3 minutes per image so I was not able to do too much) was:

>pony statue, majestic, alicorn, 4k hi-res, detailed, granite, cracked surface, weathered

>Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 174254891, Size: 512x512

>>2164

2-3 minutes per image? That must suck. How many images can you generate at once before your PC runs out of memory?

One time I left my room with an autoclicker generating more sets of images every minute. By the time I got back, I had a few thousand.

>>2166

>How many images can you generate at once before your PC runs out of memory?

I can only run one at a time, and at times have to close other apps that use memory in order for it to run.

Are you using the Stable Diffusion Model or Waifu model instead of the Pony model? The Pony Model is good and you should try it

https://huggingface.co/AstraliteHeart/pony-diffusion

https://mega.nz/file/ZT1xEKgC#Xxir5udMmU_mKaRZAbBkF247Yk7DqCr01V0pDzSlYI0

>>2167

Pony model loaded.

Here are some new AI generated pony statues, image sets 3 and 4 were made with the seed you posted instead of a random seed.

Had to split them up because trying to post the next 4 intact broke some kind of limit. "Request Entity too large".

Anonymous

No.2173

>>2172

Strange that the first image in the set, using the same seed and prompt, didn't come out the same as the one I got; it should have. When running batches from a given seed, each subsequent image should use previous_seed+1.
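
To spell out the batch behaviour described above: if each image in a batch uses the previous seed plus one, then the seeds for a whole batch can be listed up front, which makes it easy to regenerate a single image from a grid. This is just the arithmetic from the post, not the webui's actual code, and `batch_seeds` is a made-up name.

```python
def batch_seeds(first_seed: int, batch_size: int) -> list[int]:
    """Seeds for a batch, assuming image i uses first_seed + i."""
    return [first_seed + i for i in range(batch_size)]


# Seed taken from the statue prompt posted earlier in the thread.
print(batch_seeds(174254891, 4))
```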

>>2176

Strange. For reference the arguments I use to start Stable Diffusion are:

>--ckpt pony_sfw_80k_safe_and_suggestive_500rating_plus-pruned.ckpt --lowvram --opt-split-attention

Other than that I have the standard settings (no scripts or face fixes or anything) and standard txt2img

>>2177

>lowvram --opt-split-attention

I was using those exact settings except for that part. They just reduce the load on your computer and have no effect on the resulting image, right?

>>2178

They should just reduce the memory used and not change what is produced. Just posted full list of arguments in case I'm wrong on that. It was just strange that you didn't get the same image using same prompt and seed.

>>2179

>It was just strange that you didn't get the same image using same prompt and seed.

You see, these AIs no longer "mash" images together with the hope of producing a coherent pic.

They basically emulate human creativity.

Artists need external inputs as well. They consciously and unconsciously absorb bits and pieces from every other artwork they may have seen before. Sometimes it may even be apparently unrelated life experiences.

Regardless, when artists draw inspiration from other art sources, they are pretty much doing the same thing that AIs do.

It's impossible to trace back the source pics. What AIs make is about as original as what any human could've conceived.

It makes sense you don't always get the same results, even when all things are equal.

Anonymous

No.2183

>>2182

>It makes sense you don't always get the same results, even when all things are equal.

Running the same seed and prompt, I get the same image every time. Also, when I run other people's prompts and seeds (ones I've seen posted), I get the same image they got, as long as I use the same model they used.
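To illustrate why a fixed seed should reproduce the same picture: the starting noise comes from a seeded random generator, and the same seed always yields the same numbers. This is only a toy sketch in plain Python, not Stable Diffusion itself; `initial_noise` is a made-up helper, and it assumes the model, sampler, and settings are otherwise identical.

```python
import random


def initial_noise(seed: int, size: int = 8) -> list[float]:
    """Toy stand-in for the starting noise of a diffusion sampler.

    Assumption: a real sampler seeds its noise generator in a comparable
    way. This is an illustration only, not Stable Diffusion code.
    """
    rng = random.Random(seed)  # private generator, global state untouched
    return [rng.gauss(0.0, 1.0) for _ in range(size)]


same_a = initial_noise(3967284458)
same_b = initial_noise(3967284458)
other = initial_noise(3967284459)

print(same_a == same_b)  # same seed: identical noise
print(same_a == other)   # neighbouring seed: different noise
```

If two people get different images from the same seed and prompt, the usual suspects are a different model, sampler, or image size, since each of those changes what is done with that noise.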

Anonymous

No.2184

>>2182

I don't think the AI is updating its own model with data from the pictures it generates.

I hope it isn't. I don't want my AI to give itself dementia by using its garbled output as new input.

By now its garbled output probably outnumbers the number of images it was trained on.

Anonymous

No.2190

1667953434.mp4 (4.9 MB, Resolution:576x1024 Length:00:00:41, video_2022-11-07_12-36-29.mp4) [play once] [loop]

Anonymous

No.2193

Does anyone have any good images? I tried doing a cursory search on ponerpics and the first few pages were horrid dogshit.

The era of abstract merchants has reached a new golden age.

Merchants are being produced faster than the ADL could ever hope to flag them.

Anonymous

No.2236

Anonymous

No.2237

>>2235

Idk. I got them from Discord.

If you find out any methods, do share. I want to mass produce merchants.

Anonymous

No.2245

1668994325_1.png (722.5 KB, 1280x768, 00036-1416231330-green_eyes_s.png)

1668994325_2.png (281.3 KB, 640x384, 00033-1191004003-green_eyes_s.png)

Anonymous

No.2246

1668994653_1.png (1.1 MB, 1280x768, 00050-4283156501-watercolor.png)

1668994653_2.png (334.4 KB, 512x512, 00008-3025926800-safe_hioshir.png)

1668994653_3.png (319.5 KB, 512x512, 00019-2923236971-t-hoodie_AND_.png)

1668994653_4.png (289.2 KB, 512x512, 00002-3251689543-by_lumineko_A.png)

Pixiv has lost its collective mind recently (more than before).

It's impossible to use the site without being recommended pics of [i]pregnant toddlers!

Anonymous

No.2258

1669184042.png (11.3 MB, 3072x2560, Twilight Sparkle supposedly.png)

I got the new Everything V3 model.

It seems more varied than the previous smaller Waifu model I was using.

But it also loves to wash out the colours of anything I make with it. So I often have to saturate this desaturated art output until it looks right.

"Twilight Sparkle"

>>2273

Nice. I don't know your prompt, but adding "(unicorn)" to the prompt and "(text), devil" to the negative prompt might fix some of the devil horns and text. Love how AI art is evolving with better and better models.

Anonymous

No.2276

>>2274

Speaking of AI evolution how do I update my installation of Stable Diffusion? I heard the new version has no limit on text input and is better at drawing hands.

Anonymous

No.2278

>>2277

The way I do it: I used git to get the initial code, and I run a git pull in the bat file used to start SD.

So initially do a

>git clone https://github.com/AUTOMATIC1111/stable-diffusion-webui

Then in the bat file I do

>...

>set COMMANDLINE_ARGS=--ckpt pony_sfw_80k_safe_and_suggestive_500rating_plus-pruned.ckpt --lowvram --opt-split-attention

>

>cd stable-diffusion-webui

>git pull

>call webui.bat

>cd ..
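For anyone who would rather script this than edit the bat file, here is a minimal Python sketch of the same update-then-launch sequence. Assumptions: `build_launch_plan` and `run_plan` are made-up helper names, `model.ckpt` is a placeholder filename, and webui.bat is assumed to read COMMANDLINE_ARGS from the environment the same way it does after `set` in the bat file.

```python
import os
import subprocess


def build_launch_plan(ckpt: str, low_vram: bool = True):
    """Return (env_updates, commands) mirroring the bat file above.

    Nothing is executed here; run_plan() does the actual work.
    """
    args = ["--ckpt", ckpt]
    if low_vram:
        # Same memory-saving flags as in the bat file.
        args += ["--lowvram", "--opt-split-attention"]
    env_updates = {"COMMANDLINE_ARGS": " ".join(args)}
    commands = [
        ["git", "pull"],             # update the checkout in place
        ["cmd", "/c", "webui.bat"],  # then start the web UI (Windows)
    ]
    return env_updates, commands


def run_plan(repo_dir: str, env_updates: dict, commands: list) -> None:
    """Run each command inside the repo with the extra environment set."""
    env = {**os.environ, **env_updates}
    for cmd in commands:
        subprocess.run(cmd, cwd=repo_dir, env=env, check=True)


plan_env, plan_cmds = build_launch_plan("model.ckpt")
print(plan_env["COMMANDLINE_ARGS"])
```

Calling `run_plan("stable-diffusion-webui", plan_env, plan_cmds)` would then do the pull and the launch. Because of `check=True`, a failed pull stops the script instead of starting an outdated install.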

Wtf is he doing to edit the AI generated art and get new ones like that? [YouTube] Waifu Diffusion AI speed paint

>>2281

I assumed he did, but I looked at the description and he is using the Krita plugin for Stable Diffusion, which runs img2img with a prompt. It takes a basic input image; you tell it what it should draw and set a weight for how closely the result should resemble the input. I am not fully sure what the weights should be, but I think the Krita plugin is fairly easy to use.

First get Krita https://krita.org/

Then install the Krita plugin https://github.com/sddebz/stable-diffusion-krita-plugin

You can do the same in Stable Diffusion webui directly by going to the img2img tab.
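On the weight question, here is a rough sketch of how that "how much should it resemble the input" setting is commonly implemented. As far as I know, img2img adds noise to the input image and then runs only a fraction of the sampling steps, and the strength value picks that fraction. The function name is made up, and the exact rounding in your frontend may differ, so treat this as an assumption rather than the plugin's actual code.

```python
def img2img_steps(total_steps: int, strength: float) -> int:
    """Number of denoising steps actually run for a given strength.

    Assumption: strength 0.0 keeps the input image untouched and
    strength 1.0 ignores it, behaving like plain txt2img.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0.0 and 1.0")
    return min(int(total_steps * strength), total_steps)


for strength in (0.0, 0.5, 0.75, 1.0):
    print(strength, img2img_steps(20, strength))
```

In practice this means low values only touch up the sketch, while high values let the prompt take over and keep just the rough composition.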

>>2283

Have you tried using that setting where your generator makes the image tiny and then scales it up?

>>2287

I have not tried upscaling or any of the fancy options as the biggest I can generate is 512x512 and even that is pushing my card to the limits. And it takes a couple of minutes to generate an image. So experimenting with settings and what it does is an exercise in patience and remembering what I did half an hour ago and if my changes actually had an impact.

>>2288

Damn, that sucks. Back when I had to make do with a piece of shit laptop that would take multiple minutes to boot up and do basic tasks like open the file explorer or open a webpage, I ran Fallout New Vegas at barely 10 frames a second even with the lowest graphics settings and as few mods as possible. The game was borderline unplayable especially when combat started so I had to rely on companions basically doing all combat for me. The lag didn't fuck their attacks up as much. Even the fucking word processor lagged with that thing. A word processor!

Does anyone actually believe GPU prices are dropping down anytime soon?

>>2288

Specs?

>>2291

Lmao

My trashtop struggles to run Halo 2 on anything but low settings.

Android-x86 is game-changing tho, if your phone is old and shitty like mine.

I couldn't have possibly run the PC version of Honkai on that thing.

>>2292

>Does anyone actually believe GPU prices are dropping down anytime soon?

There was talk that GPU prices could drop when Ethereum made its change and no longer required top-end GPUs to mine coins (or something like that). But I assume the Manufacturers/Shops have gotten used to getting paid top dollars so sadly it might take a good while before prices drop.

>Specs?

My GPU is a GTX 1060 3GB, so it is on the low end of what can run it at all.

1669930434.jpg (61.4 KB, 500x500, artworks-tqvum3VtN9KrP2rh-5O9Fyw-t500x500.jpg)

>>2293

>But I assume the Manufacturers/Shops have gotten used to getting paid top dollars

I guess so. I remember some Anons warning about this before.

>GTX 1060 3GB

>Low-end

Welp, I guess I shouldn't even bother.

Anonymous

No.2295

>>2294

>Welp, I guess I shouldn't even bother.

I think the minimum recommended is a 4GB card, and 3GB was the lowest they had been able to run it on. Still, this AI runs on consumer-grade GPUs, whereas others need 40GB+ cards, so it is much better than it could have been. It's been a while since I read the FAQ, though, and they may have lowered the required spec (it looks like 2GB of VRAM is the lowest now). People have also been able to run it on CPU only; it is significantly slower, but it is a possibility.

>Nvidia guide: https://rentry.org/voldy

>CPU guide: https://rentry.org/cputard

>AMD guide: https://rentry.org/sdamd

(I think these are still the relevant guides)

Can my hp laptop run Crysis Stable Diffusion?

Asking for a poorfag fren that might want to generate mares.

Anonymous

No.2297

>>2296

I think if you run the CPU version it could work. Not sure though as I haven't tried the CPU one, but it might be worth checking out. If it has an Nvidia GPU with 2GB or more vram it should run according to the guide without problem.

Isn't there a command you can add to the .bat file to make it generate images slower while using less memory at once?

Anonymous

No.2299

>>2298

Yes, the "--lowvram --opt-split-attention" part of COMMANDLINE_ARGS is the set of command line args for low-VRAM systems. The "--ckpt" arg selects which model it uses (the pony model in this example)

>set COMMANDLINE_ARGS=--ckpt pony_sfw_80k_safe_and_suggestive_500rating_plus-pruned.ckpt --lowvram --opt-split-attention

Wait do you mark a word or phrase with (((these))) or !!!these!!! to increase or decrease the importance the AI gives to those words in the request?

Anonymous

No.2301

>>2300

Yes, you have a few weighting and other tricks you can do in the prompt. Can't remember them all or find the guide that had it all listed, but I guess it is in here somewhere: https://rentry.org/sdgoldmine

(((increased importance))) - the more parentheses, the more importance it should be given
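To put numbers on the parentheses trick: as I remember the AUTOMATIC1111 webui docs, each pair of round brackets multiplies a term's attention by 1.1, and each pair of square brackets divides it by 1.1. I'm not aware of any !!!this!!! syntax there. The sketch below only does that arithmetic for a single fully wrapped term; `emphasis_weight` is a made-up name, and the 1.1 factor is an assumption to check against your version.

```python
def emphasis_weight(term: str) -> tuple[str, float]:
    """Strip wrapping brackets from one prompt term and return its weight.

    Assumption: "(x)" multiplies attention by 1.1 and "[x]" divides it
    by 1.1, once per nesting level.
    """
    weight = 1.0
    while len(term) >= 2:
        if term[0] == "(" and term[-1] == ")":
            weight *= 1.1
        elif term[0] == "[" and term[-1] == "]":
            weight /= 1.1
        else:
            break
        term = term[1:-1]  # peel off one bracket pair
    return term, round(weight, 4)


print(emphasis_weight("(((increased importance)))"))
print(emphasis_weight("[text]"))
```

The weight grows quickly with nesting, which is probably why piling on five or more pairs can swamp the rest of the prompt.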

Anonymous

No.2303

Anonymous

No.2309

Anonymous

No.2311

>>2307

>pic 3

The AI reinterpreted two hoes into one waifu, but one of the hoes is fat enough to be two women.

Anonymous

No.2312

>>2302

Love the AI renderings. Looking forward to getting "colorized" videos in AI rendering. So much good to look forward to.

Anonymous

No.2313

Has anyone by the way run any of the CWC comics through any of the AI's yet?

>>2320

There is an NSFW model but can't find link to it . I assume that is the one being used

...Found the link

>https://desuarchive.org/mlp/thread/39110334/#39114433

>>NSFW Pony Model Weights.

>>https://drive.google.com/drive/folders/14JyQE36wYABH-0TSV_HBEsBJ3r8ZITrS?usp=sharing

why

Just updated my Stable Diffusion install, I see new buttons and features but the limit on prompts is still there.

How do I get rid of that text limit?

>>2327

There's a limit on the number of prompts I can give the AI at once. It's around 75 words. How do I break this limit on input text?

>>2329

Not sure, but have you tested whether the limit is on the number of individual words or on the number of prompt sections?

Like:

>word1, word2, word3, ...

>full sentence 1, full sentence 2, ....

...

>pony, flower, lake

>pony standing in field by a lake surrounded by flowers, stars, moon, (unicorn), by Peter Elson

(I have not run these examples so I have no idea what they will produce)

The only limit I can see is on the full input, and I think this is a limit in Stable Diffusion itself that the webui can't circumvent. But it could be that there is a limit on the number of "sections", i.e. keywords or key sentences.
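For what it's worth, the 75 figure is counted in tokens rather than words or comma-separated sections: the text encoder takes 77 tokens, and two of those are start and end markers. As far as I know, newer webui versions get around it by cutting a long prompt into chunks of 75 tokens and encoding each chunk separately. Below is a rough sketch of that chunking. The tokenizer is a crude stand-in (one token per word or punctuation mark), not the real one, and both function names are made up.

```python
import re

CHUNK_SIZE = 75  # usable tokens per chunk (77 minus start and end markers)


def rough_tokens(prompt: str) -> list[str]:
    """Crude approximation: every word and punctuation mark is one token.

    The real tokenizer can split a rare word into several tokens, so
    actual counts are often higher than this.
    """
    return re.findall(r"\w+|[^\w\s]", prompt)


def split_into_chunks(tokens: list[str], size: int = CHUNK_SIZE) -> list[list[str]]:
    """Cut the token list into consecutive chunks of at most `size`."""
    return [tokens[i:i + size] for i in range(0, len(tokens), size)]


tokens = rough_tokens("pony standing in field by a lake, " * 25)
print(len(tokens), [len(chunk) for chunk in split_into_chunks(tokens)])
```

Note that commas count as tokens too, so a long list of short tags can hit the limit sooner than a word count would suggest.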

Anonymous

No.2331

>request man in blue jacket with white flames

Wow, whoever made this AI model loves men.

Anonymous

No.2332

>>2330

Also I updated my Stable Diffusion installation after wiping my PC. Now the text limit gets slightly higher whenever I go over the limit. Seems to be missing key words now and then, unless I'm using the wrong terms for some things like pony_ears.

Anonymous

No.2335

>>2334

Does putting Text in the negative prompt get rid of that watermark?

What about mixing the model with other models?

Anonymous

No.2336

Art Subreddit Bans Guy Because His Work “Looks Like It was AI-Generated”

https://nichegamer.com/art-subreddit-bans-artist-style-ai/

Anonymous

No.2337

Who are your favourite artists to use for art generation?

>type "horse vagina" over and over

>get this

why

>>2342

I thought combining AI models would make it better at producing horse pussy, not worse. What the hell is this?

Anonymous

No.2345

>>2344

When I wrote

>blonde

I got no blonde hair.

When I wrote (((((blonde))))) to emphasize the tag above all others, I got that weird yellow pic.

Rainbow Dash

I'm bored now. The art is coming out crappy. I don't think I'll do the rest of the mane six.

>>2348

I don't remember if I combined those with the other models or not but I'm sure Everything V3 and an anime waifu model are in there.

The mixed model can't make good porn.
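A note on why mixing can make a model worse at its specialty: as far as I know, the webui's "weighted sum" merge is a plain per-weight average, A * (1 - M) + B * M, so whatever one model learned gets diluted by the other. Here is a toy sketch with tiny dicts standing in for checkpoints. `weighted_sum` is a made-up helper, and real checkpoints hold tensors, not short lists.

```python
def weighted_sum(model_a: dict, model_b: dict, multiplier: float) -> dict:
    """Blend two toy models: result = A * (1 - M) + B * M per weight.

    Assumption: keys missing from model_b are copied from model_a as-is.
    """
    merged = {}
    for key, a_values in model_a.items():
        b_values = model_b.get(key)
        if b_values is None:
            merged[key] = list(a_values)
            continue
        merged[key] = [
            a * (1 - multiplier) + b * multiplier
            for a, b in zip(a_values, b_values)
        ]
    return merged


specialist = {"layer.weight": [1.0, 0.0]}
generalist = {"layer.weight": [0.0, 1.0]}
print(weighted_sum(specialist, generalist, 0.25))
```

With a multiplier of 0.25 the result keeps only three quarters of the first model's weights, and every further merge dilutes it again.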

>>2349

Just wondered as the rendering looked a bit like the ones I got when I tried to generate MLP using Waifu model.

>pic 1 Waifu Model (v1.2)

>pic 2 Pony Model

>twilight_sparkle, mlp, seductive

>Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 3967284458, Size: 512x512

>Not the best seed I guess
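Since reproducing someone's image means copying every value on that settings line, here is a small sketch that splits such a line into a dict so nothing gets missed. `parse_params` is a made-up helper, and it assumes no value contains a comma, which holds for the line above but may not hold for every field the UI can emit.

```python
def parse_params(line: str) -> dict[str, str]:
    """Split 'Key: value, Key: value' into a dict of strings.

    Assumption: values never contain commas or colons themselves.
    """
    params = {}
    for part in line.split(","):
        key, sep, value = part.partition(":")
        if sep:  # skip fragments without a colon
            params[key.strip()] = value.strip()
    return params


info = parse_params(
    "Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 3967284458, Size: 512x512"
)
print(info["Seed"], info["Sampler"], info["Size"])
```

Values stay as strings on purpose; converting the seed or step count to int is left to whoever consumes the dict.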

Anonymous

No.2372

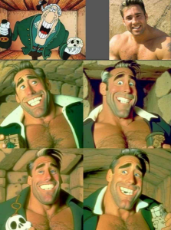

>Deepfakes: Faces Created by AI Now Look More Real Than Genuine Photos

>Spoiler alert:

>The single real face in the composite image above is located in the second column from the left, fourth image from the top.

https://www.activistpost.com/2023/01/deepfakes-faces-created-by-ai-now-look-more-real-than-genuine-photos.html

Anonymous

No.2389

So I tried generating something with the (((((arcane style))))) tag and got this.

Turns out if you overrate Arcane's importance you get weird satanic looking shit.

Anonymous

No.2393

>AI images lose copyright protections

>The decision by the US Copyright Office is among the first to deal with AI-generated artwork and intellectual property

>A US federal agency has concluded that artwork made using artificial intelligence does not qualify for copyright protections, saying such images were not created by a human being and cannot be registered as legitimate IP.

>The US Copyright Office outlined its stance in a recent letter to graphic novelist Kris Kashtanova, who attempted to register a work containing images created with the help of the ‘Midjourney’ AI software. The office would only agree to copyright elements written and arranged by a human author.

https://www.rt.com/news/571952-artificial-intelligence-art-copyright/

>>2447

This is interesting. I wonder what it means for the industry as a whole, particularly for artists who only partly use AI tools in their art process.

Anonymous

No.2484

>>2481

I believe that from the very moment they use it, their human intellectual property goes up in flames.

Anonymous

No.2510

>Last Stand | Sci-Fi Short Film Made with Artificial Intelligence - (9:59 long)

>Disclaimed: None of it is real. It’s just a movie, made mostly with AI, which took care of writing the script, creating the concept art, generating all the voices, and participating in some creative decisions. The AI-generated voices used in this film do not reflect the opinions and thoughts of their original owners. This short film was created as a demonstration to showcase the potential of AI in filmmaking.

[YouTube] Last Stand | Sci-Fi Short Film Made with Artificial Intelligence

Anonymous

No.3204

1704664346.mp4 (4.1 MB, Resolution:1080x1920 Length:00:00:46, you.are.banned-20240104-0001.mp4) [play once] [loop]

Anonymous

No.3331

>Jonathan Blow on AI art and tech

[YouTube] Jonathan Blow on AI art and tech

In a nutshell: "Mediocre artists will lose their jobs, and the worthy will remain".
